It was all very cloak and dagger: a source who insisted on strict anonymity, hidden behind a blacked-out Zoom screen and refusing to identify himself out of fear that he, too, could be targeted. But by whom, exactly? The Mafia? Some drug cartel?

The boogeymen, it turns out, are the AI companies that this entrepreneur (whom Page Six Hollywood will refer to by the pseudonym Alex in this story) is aiming to interrogate and expose. “I’m probably more of a target than anything else,” said Alex, noting that, no matter your stance on AI, it has led to some scary scenes. “I mean, Sam Altman just got a Molotov cocktail.”

Here is what we can share about Alex: He is an entrepreneur with a background in fintech who has poured over $100,000 of his own money into a clandestine startup that he says can uncover the IP piracy running rampant within AI video and image generators. He eventually plans to pitch it to venture capital investors.

Alex says he was first called to action last year, when people were using ChatGPT’s new image generator to create images that essentially ripped off the style of Studio Ghibli, the famed Japanese anime studio co-founded by Hayao Miyazaki. “I was just like, OK, someone needs to do something about this. And given that I have experience in the venture space, I know how to do this,” he tells us.

In January, he launched LightBar, a policing platform that paid people to use various AI models and uncover the ways in which they generated copyrighted characters, despite all the so-called “protections” the platforms had pledged. But that was just the first step. “LightBar was a bit of a Trojan horse,” he says. “VN.ai is actually the company.”

This product is a form of “Forensic IP Detection”: it tests AI models to prove that they can reproduce copyrighted characters even when the prompt never names the character.

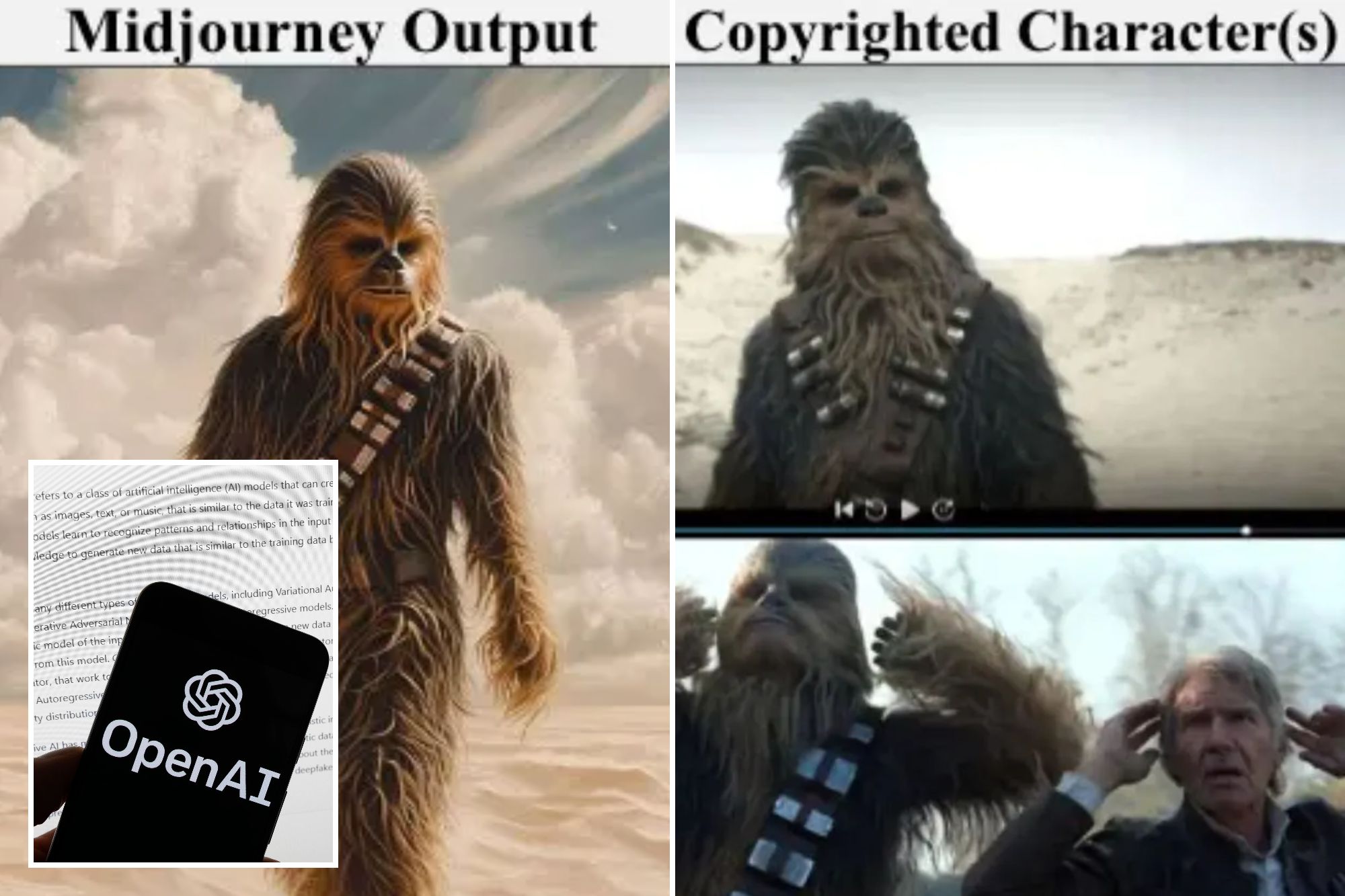

Here’s an example Alex showed me using Midjourney, a popular image generator that was recently sued for copyright infringement by Disney, Universal and Warner Bros. AI models allow you to input style references, codes that tell them to mimic a particular visual style. With Midjourney, users can also upload an image as a visual reference point. In this instance, an image of Dagobah from the original “Star Wars” trilogy was uploaded, one that contained no characters. But when prompted to generate “a small green creature wearing a robe,” it spit out a character that looked an awful lot like Yoda.

In additional attempts, it would spit out Grogu from “The Mandalorian.” Adding more physical and environmental details to the prompt made the result resemble Yoda even more closely, though the name “Yoda” never appeared in any prompt. Even without the reference image, the model would produce Yoda if the prompt described enough physical characteristics, though less frequently.

Alex says he has shared these findings with some of the studios involved in the Midjourney lawsuit, and that they were shocked at how easily all of this can be done. “We are constantly talking (with them),” says Alex. “It has been a work in progress.” (Revenue for VN.ai would come from a mix of bespoke contractor fees and subscriptions to its anti-piracy monitoring platform.)

Lawyers for Midjourney argue that using publicly available material, regardless of whether it’s copyrighted, to train AI models to read, write, and create images and videos falls under “transformative fair use” and is thus legal. (Separately, Chinese firm Minimax is also being sued by the three studios. Minimax argues that it is beyond US jurisdiction and that liability falls on the users, not the company.)

The idea behind “fair use” is that copyrighted material can be used without permission in the service of technological or creative advancement, so long as the use doesn’t undercut the copyright holder’s own ability to make money. So who is right here? Kim Meyer, a partner at Bard Marella and the lawyer who represented Scarlett Johansson in her lawsuit against OpenAI, says that, so far, courts appear to be mostly siding with the AI firms. “The use of copyrighted material in training data is likely fair use, but there can be exceptions depending on various other facts,” she says. The larger problem, according to Meyer, is that copyright law isn’t equipped to call balls and strikes in the AI era. “Unless we have a really big overhaul of the system,” Meyer says, these legal disputes over what counts as “fair use” will continue.

A never-ending cycle of litigation is unsustainable, Alex argues. It’s too costly, too time-consuming, and too difficult to weed out every instance of infringement. And even if the studios prevail in their lawsuits, or if functional licensing deals emerge, the market will still need to be policed and, at times, the rules enforced.

Therein lies Alex’s ultimate goal: a robust suite of IP detection tools, “because the next step after you prove it in court is, you need to know that you’re blocking (the infringement).” He doesn’t think the studios are equipped to do this, nor does he trust the AI firms to play by the rules.

Will we ever get there? Who knows. It certainly feels a long way off. But if a balance can be struck, then just maybe Alex will feel safe revealing his true identity to the public.