Google’s AI-generated search results are spewing out tens of millions of inaccurate answers per hour – even as the tech giant siphons visitors and ad revenue from cash-strapped news outlets, according to a bombshell analysis.

To test the accuracy of Google’s AI Overviews, startup Oumi reviewed 4,326 Google search results generated by Google’s Gemini 2 model and the same number of results generated by its more advanced Gemini 3 model.

The analysis found that the models were accurate 85% and 91% of the time, respectively.

With Google expected to handle more than 5 trillion searches in 2026 alone, that means AI Overviews are spitting out fake news at a rate of hundreds of thousands of mistakes every single minute – with users left none the wiser.
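The per-minute figure can be roughly reproduced with back-of-the-envelope arithmetic. The sketch below is illustrative only: it assumes every search returns an AI Overview (the analysis does not say what share actually do) and applies the 91% accuracy rate reported for Gemini 3. The function name and the `overview_share` parameter are hypothetical, not part of Oumi's methodology.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes in a non-leap year


def wrong_answers_per_minute(searches_per_year: float,
                             accuracy: float,
                             overview_share: float = 1.0) -> float:
    """Estimate inaccurate AI answers per minute.

    overview_share is an assumed fraction of searches that show an
    AI Overview; 1.0 (every search) is an upper-bound assumption.
    """
    error_rate = 1.0 - accuracy
    return searches_per_year * overview_share * error_rate / MINUTES_PER_YEAR


# Figures from the article: 5 trillion searches, 91% accuracy (Gemini 3).
per_minute = wrong_answers_per_minute(5e12, 0.91)
per_hour = per_minute * 60

print(f"{per_minute:,.0f} per minute, {per_hour:,.0f} per hour")
```

Under those assumptions the estimate comes to roughly 856,000 wrong answers per minute, or about 51 million per hour. Lowering `overview_share` scales both numbers down proportionally.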

The New York Times was first to report on Oumi’s analysis.

“Google AI Overviews have been a disaster for publishers who rely on clicks to fund the production of quality journalism, but they also let down users looking for accurate information,” said Danielle Coffey, president and CEO of the News/Media Alliance, a trade group that represents more than 2,000 news outlets including The Post.

The wrong answers included several basic fumbles, such as misstating the year in which musician Bob Marley’s home was converted into a museum, misstating the year that former MLB relief pitcher Dick Drago died, and claiming there was no record of Yo-Yo Ma being inducted into the Classical Music Hall of Fame even though he was in 2007, according to examples Oumi provided to the Times.

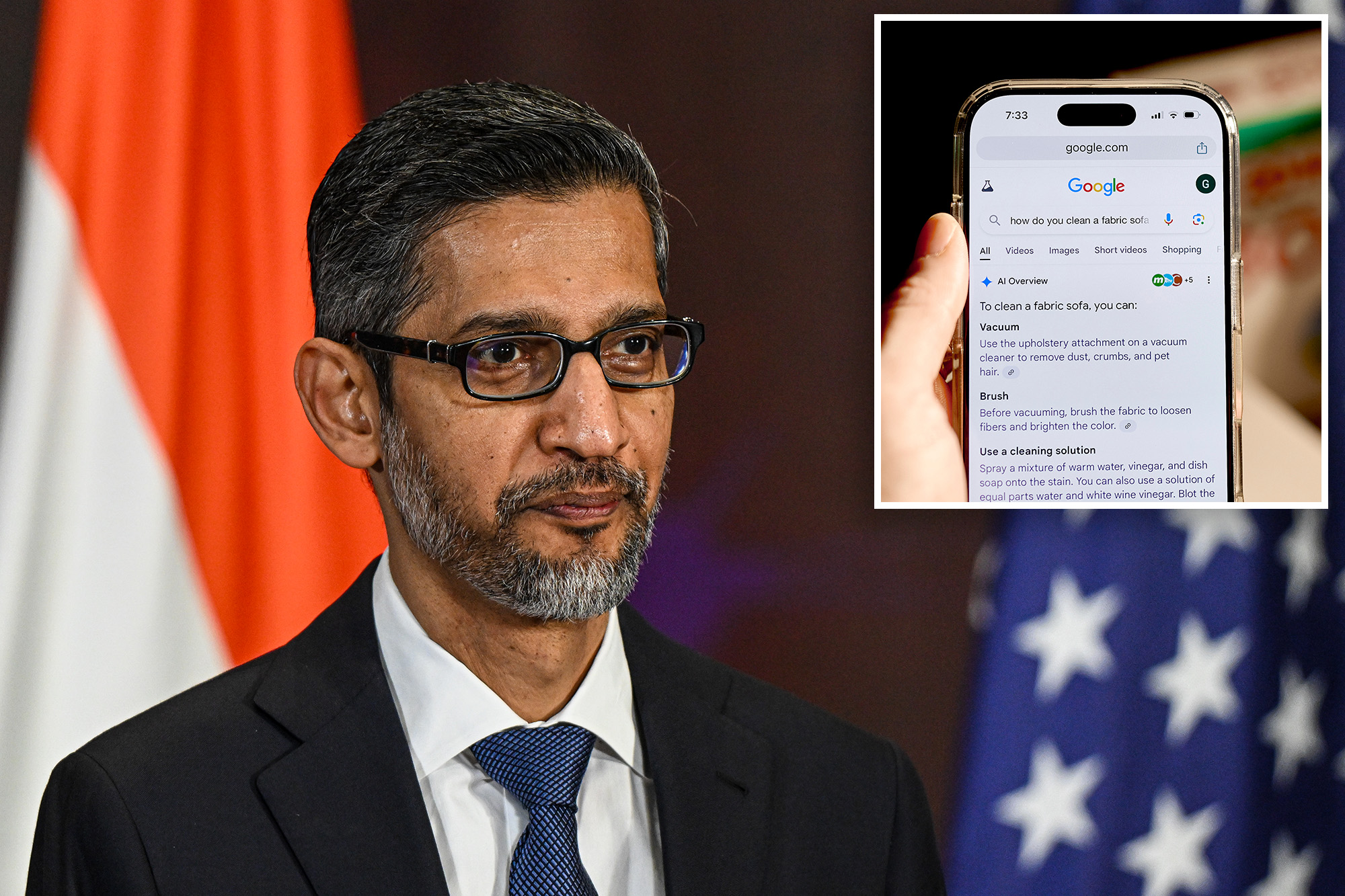

AI Overviews have appeared at the top of Google search results since 2024, while the traditional set of blue links to news outlets is effectively buried out of sight. Publishers have long accused Google, led by CEO Sundar Pichai, of ripping off their work to “train” its AI models without proper credit or compensation.

“Algorithmically-generated responses that pull in data from nearly every source on the internet simply cannot be trusted,” Coffey said.

“Publishers spend enormous amounts of time and money ensuring that the content they deliver to their readers is properly fact-checked, while Google’s AI Overviews are produced with no oversight or accountability.”

AI Overviews also has a penchant for citing information from questionable or easily edited sources, such as Facebook pages, blog posts and Wikipedia entries, as though it is fact.

The feature appears easy to trick into spewing fake news.

The Times cited an example in which BBC podcast host Thomas Germain wrote up a blog post proclaiming himself as one of “The Best Tech Journalists at Eating Hot Dogs.”

Google’s AI summaries had gobbled up the information within a day and began claiming Germain had “gained notoriety for their prowess at the ‘news division’ of competitive eating events.”

Oumi’s analysis was conducted between October and February and utilized a well-known benchmark test called SimpleQA, which was developed by OpenAI and is used to assess the accuracy of AI models.

While accuracy improved in the jump from Gemini 2 to Gemini 3, Oumi’s research showed that AI Overviews has gotten worse about correctly citing where it found information.

The percentage of AI Overviews answers that were “ungrounded,” or where the links provided by Google did not back up the information included in the AI summary, jumped from 37% in Gemini 2 to 51% in Gemini 3, the report said.

A Google spokesperson said Oumi’s study has “serious holes” – in part because the SimpleQA benchmark test includes inaccurate information within its own dataset.

The company also questioned Oumi’s reliance on its own in-house AI model, dubbed HallOumi, to conduct the analysis, despite the risk that it could also make errors.

“It uses one AI to grade another on an old benchmark that is known for being full of errors, and it doesn’t reflect what people are actually searching on Google,” the spokesperson said. “AI Overviews are built on our Gemini models, which lead the industry in accuracy, and they clear the same high-quality bar that we have for all our Search features.”

As The Post has reported, AI Overviews has struggled to provide accurate information since its launch, previously advising users to add glue to their pizza sauce and touting the “health benefits” of tobacco for kids.